Here's a scene that plays out constantly across the industry: a candidate with five years of hands-on experience — CTF wins, real incident response work, self-built home labs — walks into a security interview. They're asked to define the CIA triad, explain the difference between IDS and IPS, and describe what happens during a TCP handshake.

They get the job. Or they don't. Either way, neither the company nor the candidate learned anything useful from the process.

The cybersecurity hiring process is, in many organizations, genuinely broken. And the damage isn't abstract — it leads to bad hires, missed talent, and security teams that look good on paper but struggle when things get real.

The Root Problem: We're Testing for the Wrong Things

Most security interviews are built around knowledge recall. Can you define a term? Can you name a framework? Can you recite the steps of the NIST incident response lifecycle?

That stuff matters — baseline knowledge is real. But it's not what separates a good analyst from a great one. What separates them is what they do under pressure, with incomplete information, when the playbook doesn't quite fit the situation in front of them.

You can't test for that with flashcard questions.

What's interesting is that this isn't a secret. Hiring managers know their interview process is imperfect. Candidates know they're being tested on things that don't match the actual job. Everyone accepts it because it's what's always been done, and because designing a better process takes actual effort.

The Certification Proxy Problem

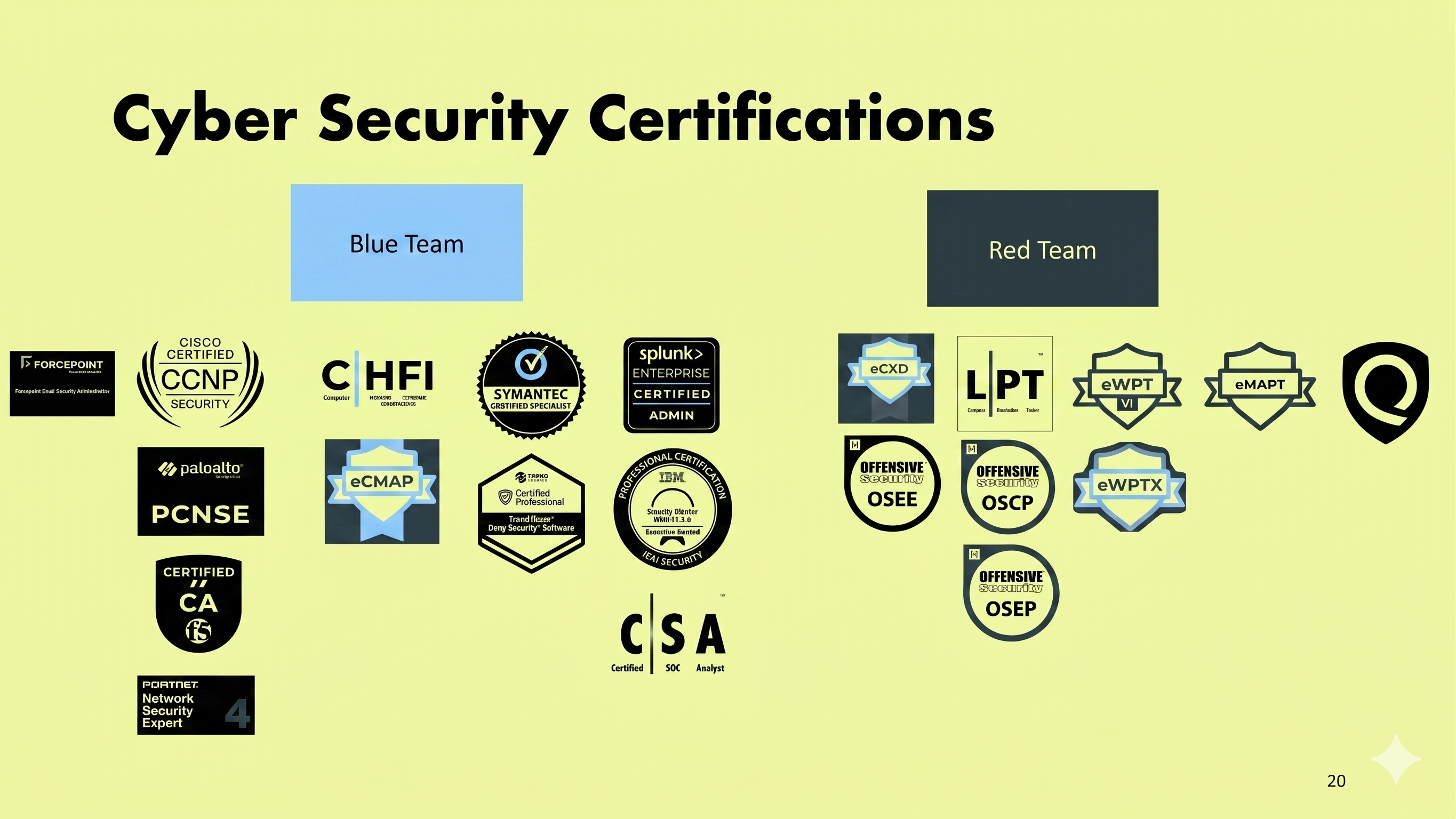

Closely related to the knowledge-recall problem is the over-reliance on certifications as a hiring filter.

Certifications aren't useless. An OSCP or a GIAC certification such as GREM tells you something real about a candidate: they studied a body of material and passed an exam designed by people who know the field. That's worth something.

But a certification is not the same as competence. Someone can memorize the material for a Security+ exam and still freeze up when they're handed an unfamiliar machine and told to find the vulnerability. Someone can fail a CompTIA exam three times and still be one of the sharpest threat hunters you've ever seen.

When organizations use certifications as a hard filter — "must have X certification to apply" — they're not filtering for skill. They're filtering for people who had the time, money, and access to pursue formal credentials. That's a very different thing, and it systematically disadvantages self-taught talent, career changers, and people from underrepresented backgrounds.

The Resume Black Hole

Then there's the resume problem. For most security roles, the hiring process starts with a resume review — often automated, often done by HR generalists who don't have a security background and are pattern-matching against keywords.

This filter is almost perfectly designed to eliminate non-traditional talent. It rewards people who have the "right" job titles, the "right" educational background, and the "right" company names in their history. It penalizes people who built their skills in unconventional ways — through independent research, bug bounty programs, CTF competitions, and community contributions.

The candidate who spent three years dominating CTF competitions and contributing to open-source security tools often doesn't make it past the resume screen. The candidate with a CS degree from a recognizable university and mediocre experience at a recognizable company often does.

This is backwards, and most people in security know it.

What a Better Process Looks Like

The good news is that some organizations have figured this out, and the pattern is pretty consistent across the ones doing it well.

Start with a practical challenge, not a resume screen

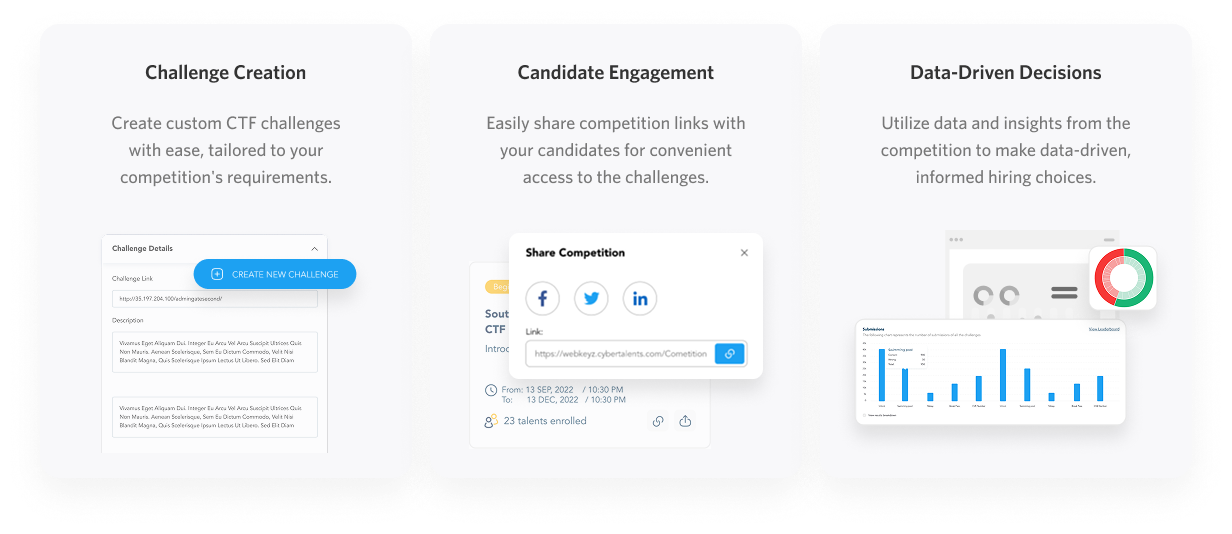

A well-designed technical challenge tells you more about a candidate in thirty minutes than a resume tells you in thirty seconds. It doesn't have to be complex — a simple CTF-style problem, a "here's a pcap file, tell me what you see" exercise, or a basic scenario response question can reveal how someone actually thinks.

This approach also opens the process to candidates who would never make it past a keyword filter. You stop hiring for pedigree and start hiring for ability.


Make the technical interview actually technical

If you're going to do a technical interview, make it technical. Give candidates a machine to explore. Show them a log file and ask them to investigate. Walk through a scenario together and ask them to make decisions out loud.

What you're looking for isn't perfection. You're looking for how they approach an unknown problem. Do they have a methodology? Do they ask the right questions? Do they stay calm when they hit a dead end? Can they explain their reasoning?

Those qualities are what actually matter on a security team, and you can only see them by watching someone work.

Value demonstrated experience differently

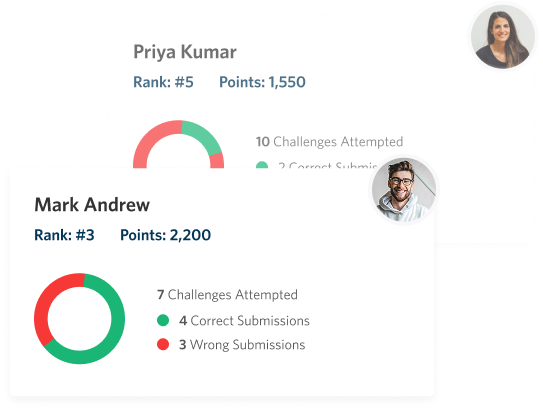

CTF rankings, bug bounty halls of fame, GitHub repositories, writeups, and community contributions are real evidence of real skill. Build them into your evaluation process.

A candidate who placed in the top 10 in three national CTF competitions has demonstrated something concrete. Treat it that way.

Separate role-specific skills from general security knowledge

Not every security role requires the same skills. A SOC analyst, a penetration tester, and a security architect need different things. Your interview process should reflect that. Generic security interviews that don't distinguish between roles often result in hiring people who are "good at security interviews" rather than good at the specific job.

A Note on the Candidate Side

If you're on the other side of this — trying to navigate a broken process as a candidate — a few things help.

Build something you can show. A home lab, a CTF write-up, a tool you built to solve a problem, a blog post where you work through a technique. Tangible artifacts of your thinking and your skill are worth more than a polished resume.

Get into competitive environments. CTF competitions are one of the most effective ways to build the kind of practical skill that stands out in interviews, and they're also a direct signal to employers who know what to look for.

And when you do get into an interview that lets you show your actual work, show your reasoning, not just your answers. The best interviewers are watching how you think, not just what you conclude.

The Bigger Picture

Fixing the cybersecurity interview process isn't just a hiring efficiency problem. It's a security problem. Teams built through broken processes have hidden skill gaps. They look capable on paper but struggle when real incidents hit. And in a field where a missed signal or a slow response can mean a catastrophic breach, that gap has real consequences.

The organizations that get this right — that hire for demonstrated competence, that use practical challenges to find non-traditional talent, that look at what people can do rather than just what they've studied — end up with stronger teams. That's not a theory. You can see it in how those teams perform when things go sideways.

Simulations Labs helps security teams and hiring organizations run practical skill assessments through CTF competitions and cyber simulations — evaluating what candidates can actually do, not just what they know.