A Grading Framework for Professors

Cybersecurity programs at universities are under growing pressure to prove their graduates are job-ready. Industry reports consistently note that many cybersecurity roles remain unfilled, not for lack of candidates, but because many graduates lack the practical, demonstrable skills employers need. The gap is not in knowledge; it is in the ability to apply that knowledge under realistic conditions.

For professors, this creates a grading challenge. Traditional exams and written assignments can test theoretical understanding, but they tell you very little about whether a student can actually detect an intrusion, analyze malware, or respond to a live incident. If cybersecurity education is moving toward hands-on, simulation-based learning, then assessment needs to follow.

This article presents a practical grading framework for professors who use CTF platforms and cyber ranges in their courses. It is designed to be adaptable across course levels, from introductory security courses to advanced capstone projects, and it maps directly to the kinds of data that platforms like Simulations Labs generate automatically.

Why Traditional Grading Falls Short in Cybersecurity

Most university grading systems were designed for disciplines where knowledge can be measured through essays, problem sets, and multiple-choice exams. Cybersecurity does not fit neatly into that model. A student might be able to define SQL injection on an exam and still fail to identify one in a live web application. Another student might struggle to articulate cryptographic theory in writing but consistently solve complex cryptography challenges in a lab environment.

The disconnect between what we test and what matters in the field creates two problems. First, it misrepresents student ability: students who are strong practitioners may receive lower grades than peers who are better test-takers. Second, it weakens the signal that employers receive from academic transcripts: a high GPA does not reliably predict job performance in a security role.

Hands-on platforms solve the assessment problem on the input side by giving students realistic work to do. But without a clear rubric for evaluating that work, professors are left making subjective judgments or defaulting to simple completion metrics that miss the nuance of how well a student actually performed.

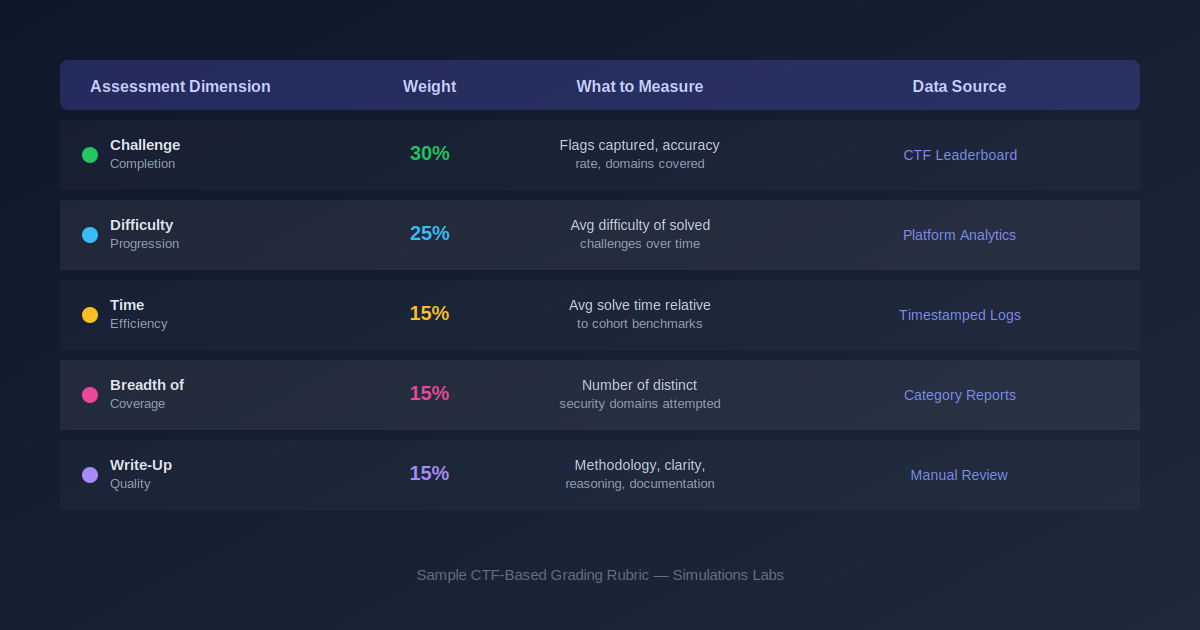

A Five-Dimension Grading Framework

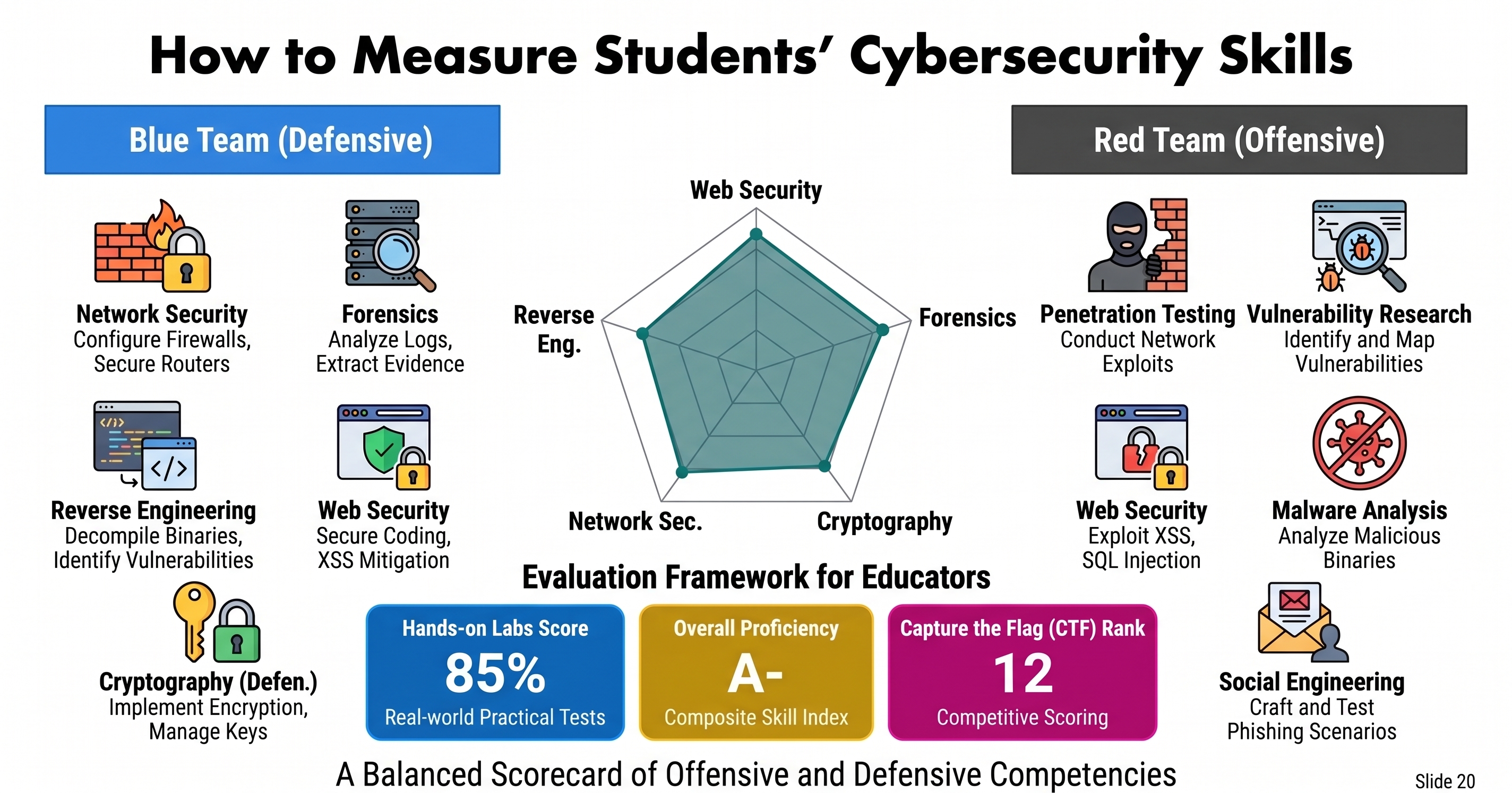

The framework below evaluates student performance across five dimensions. Each dimension captures a different aspect of cybersecurity competency, and together they provide a holistic view of what a student can do. The suggested weights are starting points and can be adjusted based on course objectives and level.

1. Challenge Completion (30%)

This is the most straightforward dimension: how many challenges did the student solve, and how accurately? It measures core technical competency and shows whether a student can apply their skills to capture flags and achieve objectives.

What to track:

- Total flags captured relative to the total available
- Accuracy rate (correct submissions vs. total attempts)
- Points earned across different challenge categories

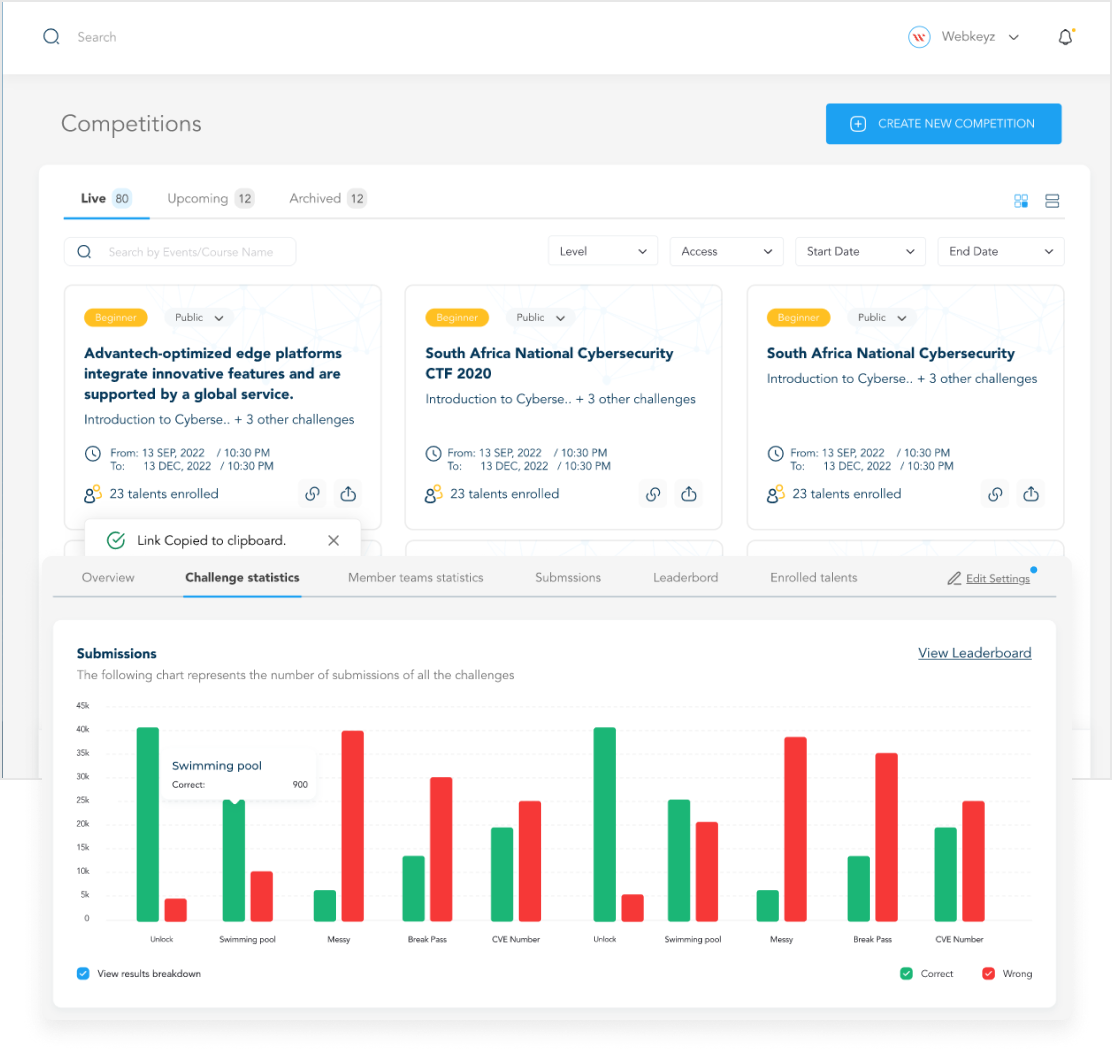

On Simulations Labs, this data is available directly from the competition leaderboard, which can be exported as CSV, Excel, or PDF. Professors can pull completion data for an entire class in minutes rather than compiling it manually.
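As a minimal sketch, the first two tracked metrics could be computed from an exported CSV along these lines. The column names (`student`, `flags_captured`, `correct_submissions`, `total_attempts`) and the total of 20 flags are hypothetical; they would need to be adjusted to match whatever the actual export contains.

```python
import csv
import io

# Hypothetical export layout: real column names in a platform export may differ.
SAMPLE_CSV = """student,flags_captured,correct_submissions,total_attempts
alice,18,18,24
bob,9,9,30
"""

TOTAL_FLAGS = 20  # assumed number of flags available in the course


def completion_metrics(csv_text, total_flags):
    """Return {student: (completion_ratio, accuracy_rate)} from export text."""
    metrics = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        attempts = int(row["total_attempts"])
        completion = int(row["flags_captured"]) / total_flags
        # Guard against division by zero for students with no submissions.
        accuracy = int(row["correct_submissions"]) / attempts if attempts else 0.0
        metrics[row["student"]] = (completion, accuracy)
    return metrics


if __name__ == "__main__":
    for name, (completion, accuracy) in completion_metrics(SAMPLE_CSV, TOTAL_FLAGS).items():
        print(f"{name}: completion={completion:.0%}, accuracy={accuracy:.0%}")
```

Keeping completion and accuracy as separate numbers makes it visible when a student reaches a high flag count mainly through repeated guessing.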

2. Difficulty Progression (25%)

Completion alone does not distinguish between a student who solved ten easy challenges and one who solved five hard ones. Difficulty progression measures whether a student is growing over the semester by tackling increasingly complex problems. This dimension rewards ambition and learning trajectory, not just volume.

What to track:

- Average difficulty rating of solved challenges, tracked week over week
- Ratio of medium and hard challenges to easy ones
- Whether the student attempted challenges above their current level

This is particularly useful for identifying students who are coasting. A student with a high completion count but a flat difficulty curve is likely retreading familiar territory rather than building new skills. Conversely, a student with fewer completions but a clear upward trajectory in difficulty is demonstrating real growth.
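One way to put a number on a "flat difficulty curve" is the least-squares slope of the weekly average difficulty. The sketch below assumes a hypothetical numeric scale (1 = easy, 2 = medium, 3 = hard) and one average per week; neither the scale nor the function name comes from any platform.

```python
def difficulty_slope(weekly_avg):
    """Least-squares slope of average solved difficulty per week.

    weekly_avg: list of floats, one per week, on an assumed 1-3 scale.
    A slope near zero suggests a flat curve; a positive slope suggests growth.
    """
    n = len(weekly_avg)
    if n < 2:
        return 0.0  # not enough data points to estimate a trend
    weeks = range(n)
    mean_x = sum(weeks) / n
    mean_y = sum(weekly_avg) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(weeks, weekly_avg))
    var = sum((x - mean_x) ** 2 for x in weeks)
    return cov / var


if __name__ == "__main__":
    coasting = [1.0, 1.0, 1.0, 1.0]  # many solves, all easy
    growing = [1.0, 1.5, 2.0, 2.5]   # fewer solves, rising difficulty
    print(difficulty_slope(coasting), difficulty_slope(growing))
```

A slope is only a rough signal: a student who starts on hard challenges and stays there also has a flat curve, so it is best read alongside the average difficulty itself.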

3. Time Efficiency (15%)

In professional cybersecurity, speed matters. Incident response has time-critical windows. Penetration testers work within engagement timelines. Measuring how efficiently students solve challenges provides insight into their fluency with tools and techniques, not just their eventual ability to find the answer.

What to track:

- Average solve time compared to class median for the same challenge
- Improvement in solve speed over the semester
- Time spent on challenges that were ultimately not solved (effort signal)

A word of caution: time efficiency should be weighted lower than completion and difficulty progression. Students who take longer but learn deeply are still succeeding. This dimension is best used as a secondary signal, not a primary one.
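The comparison against the class median can be sketched as a per-challenge ratio averaged for each student. The data layout below (challenge name mapped to each student's solve time in minutes) is an assumption for illustration, not a platform format.

```python
from statistics import median


def relative_solve_times(solve_minutes):
    """Return {student: mean ratio of own solve time to the class median}.

    solve_minutes: {challenge: {student: minutes}}, positive times only.
    A ratio below 1.0 means faster than the class median on average.
    """
    ratios = {}
    for times in solve_minutes.values():
        class_median = median(times.values())
        for student, minutes in times.items():
            ratios.setdefault(student, []).append(minutes / class_median)
    return {student: sum(r) / len(r) for student, r in ratios.items()}


if __name__ == "__main__":
    sample = {
        "web-1": {"alice": 10, "bob": 20, "cara": 30},
        "crypto-1": {"alice": 30, "bob": 60, "cara": 90},
    }
    print(relative_solve_times(sample))
```

Normalizing per challenge keeps a student from being penalized simply for attempting challenges that take everyone longer.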

4. Breadth of Coverage (15%)

Cybersecurity is a broad field, and employers value professionals who can operate across multiple domains. A student who only solves web application challenges may be strong in one area but lacks the versatility that most roles require. Breadth of coverage measures how many distinct security domains a student has engaged with.

What to track:

- Number of distinct categories attempted (web security, forensics, cryptography, reverse engineering, network security, OSINT, etc.)
- Minimum threshold of challenges attempted per category
- Balance between depth in a primary area and exposure across others

Simulations Labs organizes its challenge library by category, making it straightforward to pull category-level reports for each student. Professors can set minimum coverage requirements, such as attempting challenges in at least four domains, as part of their grading criteria.
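A coverage requirement like this is straightforward to check once each attempt is labeled with its category. In the sketch below, the four-domain minimum comes from the example above, while the per-category threshold of two attempts and the function names are assumptions.

```python
from collections import Counter


def covered_categories(attempted, min_per_category=2):
    """Return the categories with at least min_per_category attempts.

    attempted: list of category names, one entry per attempted challenge.
    """
    counts = Counter(attempted)
    return sorted(cat for cat, n in counts.items() if n >= min_per_category)


def meets_breadth(attempted, min_domains=4, min_per_category=2):
    """True if enough distinct categories reach the per-category threshold."""
    return len(covered_categories(attempted, min_per_category)) >= min_domains


if __name__ == "__main__":
    attempts = ["web"] * 3 + ["forensics"] * 2 + ["crypto"] * 2 + ["osint"] + ["reversing"] * 2
    print(covered_categories(attempts), meets_breadth(attempts))
```

The per-category threshold prevents a single throwaway attempt from counting as exposure to a domain.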

5. Write-Up Quality (15%)

This is the only dimension that requires manual evaluation, and it is intentionally included because it captures something the other four cannot: how well a student communicates their methodology. In professional settings, the ability to document findings clearly is just as important as finding them in the first place. SOC analysts write incident reports. Penetration testers deliver engagement summaries. Forensic investigators produce evidence documentation.

What to evaluate:

- Clarity of methodology description (could someone replicate the approach?)
- Logical reasoning and hypothesis testing
- Use of proper terminology and tool references
- Reflection on what worked, what did not, and what the student learned

Requiring write-ups for a subset of challenges, say three to five per semester, keeps the grading workload manageable while still assessing this critical skill. Students can choose which challenges to write up, which adds an element of self-assessment: they tend to choose the ones they found most interesting or learned the most from.
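The five dimensions combine into a single grade as a weighted sum. The sketch below uses the suggested weights from this framework and assumes each dimension has already been normalized to a 0-100 score; how that normalization is done is left to the instructor, and the dictionary keys are illustrative names.

```python
# Suggested weights from the framework; adjust to course objectives.
WEIGHTS = {
    "completion": 0.30,
    "difficulty": 0.25,
    "time": 0.15,
    "breadth": 0.15,
    "writeup": 0.15,
}


def final_score(scores, weights=WEIGHTS):
    """Weighted sum of per-dimension scores, each assumed to be on 0-100."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing dimension scores: {sorted(missing)}")
    return sum(weights[dim] * scores[dim] for dim in weights)


if __name__ == "__main__":
    student = {"completion": 90, "difficulty": 70, "time": 60, "breadth": 80, "writeup": 85}
    print(round(final_score(student), 2))
```

For the sample student, the contributions are 27 + 17.5 + 9 + 12 + 12.75, which shows how a weak time score costs relatively little under these weights.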

Putting the Framework into Practice

Setting Expectations Early

Share the rubric on day one of the course. Students perform better when they understand how they will be evaluated. Make clear which dimensions are scored automatically from platform data (completion, difficulty, time, breadth) and which one requires a manual submission and manual grading (write-ups). This transparency also reduces grading disputes later in the semester.

Using Platform Data for Mid-Semester Check-Ins

One of the advantages of CTF-based assessment is that data accumulates continuously. Do not wait until the end of the semester to review it. Use mid-semester check-ins to show students their progress across each dimension. This is where platforms like Simulations Labs add significant value: exportable leaderboard data and per-student analytics make it easy to generate individual progress reports without building custom spreadsheets.

Adapting Weights by Course Level

The suggested weights in the framework above are designed for an intermediate-level course. For introductory courses, consider increasing the weight on completion and breadth while reducing difficulty progression and time efficiency. Beginners need to build foundational exposure before being pushed on difficulty and speed. For advanced or capstone courses, increase the weight on difficulty progression and write-up quality. Advanced students should be tackling harder problems and articulating sophisticated methodologies.
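To make the adjustment concrete, weight profiles can be kept side by side and checked so that each still sums to 100%. Only the intermediate profile below comes from this framework; the introductory and advanced numbers are illustrative assumptions that follow the direction described above, not recommended values.

```python
LEVEL_WEIGHTS = {
    # Illustrative only: more weight on completion and breadth for beginners.
    "introductory": {"completion": 0.35, "difficulty": 0.15, "time": 0.10,
                     "breadth": 0.25, "writeup": 0.15},
    # The framework's suggested weights.
    "intermediate": {"completion": 0.30, "difficulty": 0.25, "time": 0.15,
                     "breadth": 0.15, "writeup": 0.15},
    # Illustrative only: more weight on difficulty progression and write-ups.
    "advanced": {"completion": 0.20, "difficulty": 0.30, "time": 0.15,
                 "breadth": 0.10, "writeup": 0.25},
}


def validate_profiles(profiles):
    """Raise ValueError if any profile's weights do not sum to 1.0."""
    for level, weights in profiles.items():
        total = sum(weights.values())
        if abs(total - 1.0) > 1e-9:
            raise ValueError(f"{level} weights sum to {total}, expected 1.0")


if __name__ == "__main__":
    validate_profiles(LEVEL_WEIGHTS)
    print("all profiles sum to 100%")
```

Because raising one weight always means lowering another, running a check like this whenever the rubric changes catches profiles that silently add up to more or less than 100%.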

Benchmarking Across Semesters

Over time, this framework generates valuable longitudinal data. You can compare cohort performance across semesters, identify which domains consistently challenge students, and adjust your curriculum accordingly. If every cohort struggles with forensics challenges, that is a signal to invest more classroom time in forensics before the lab component. This kind of evidence-based curriculum refinement is exactly what accreditation bodies want to see.
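As a rough sketch of that comparison, the function below lists the categories whose class-wide solve rate fell below a threshold in every recorded semester. The data layout, the semester labels, and the 50% threshold are all assumptions made for illustration.

```python
def persistently_weak(solve_rates_by_semester, threshold=0.5):
    """Return categories whose solve rate is below threshold in every semester.

    solve_rates_by_semester: {semester: {category: fraction solved, 0.0-1.0}}.
    Only categories present in all semesters are considered.
    """
    semesters = list(solve_rates_by_semester.values())
    if not semesters:
        return []
    common = set.intersection(*(set(rates) for rates in semesters))
    return sorted(cat for cat in common
                  if all(rates[cat] < threshold for rates in semesters))


if __name__ == "__main__":
    history = {
        "semester-1": {"web": 0.8, "forensics": 0.35, "crypto": 0.45},
        "semester-2": {"web": 0.75, "forensics": 0.4, "crypto": 0.6},
    }
    print(persistently_weak(history))
```

Requiring a category to be weak in every semester filters out one-off dips caused by a single unusually hard challenge set.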

Addressing Common Concerns

What about cheating?

Flag sharing is a legitimate concern in CTF-based assessment. Several mitigations help: use dynamic flags that are unique per student where the platform supports it, weight write-up quality to catch students who submit flags they cannot explain, and monitor for statistically anomalous patterns like a student suddenly solving five hard challenges in two minutes after weeks of struggling with easy ones.
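The burst pattern described above can be caught with a simple sliding-window rule. This is a fixed-threshold heuristic rather than a statistical test, and the input layout (solve timestamps in seconds paired with a difficulty label) is an assumption; the defaults mirror the "five hard challenges in two minutes" example.

```python
def has_suspicious_burst(solves, window_seconds=120, min_hard=5):
    """True if min_hard hard challenges were solved within window_seconds.

    solves: list of (timestamp_seconds, difficulty) for a single student,
    where difficulty is one of "easy", "medium", "hard" (assumed labels).
    """
    hard_times = sorted(t for t, difficulty in solves if difficulty == "hard")
    # Slide over each run of min_hard consecutive hard solves.
    for i in range(len(hard_times) - min_hard + 1):
        if hard_times[i + min_hard - 1] - hard_times[i] <= window_seconds:
            return True
    return False


if __name__ == "__main__":
    burst = [(1000 + 20 * i, "hard") for i in range(5)]    # five in 80 seconds
    steady = [(3600 * i, "hard") for i in range(5)]        # one per hour
    print(has_suspicious_burst(burst), has_suspicious_burst(steady))
```

A flag from a rule like this is a prompt for a conversation or a closer look at the write-up, not proof of misconduct.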

Is this too much work for professors?

Four of the five dimensions are data-driven and can be pulled directly from the platform. The only manual component is write-up evaluation, and that is limited to a manageable number of submissions per student. Compared to grading dozens of exams or research papers, this approach is often less work, not more, especially when the platform handles data export.

How does this support accreditation?

Accreditation bodies increasingly want evidence of student learning outcomes tied to specific competencies. This framework maps each dimension to measurable outcomes: technical competency (completion), growth (difficulty progression), efficiency (time), versatility (breadth), and communication (write-ups). The data exports from the platform serve as direct evidence for accreditation documentation.

Conclusion

Measuring cybersecurity skills does not have to mean choosing between rigor and practicality. A well-designed grading framework that combines automated platform data with targeted manual evaluation gives professors the tools to assess what actually matters: can this student do the work?

Platforms like Simulations Labs make this framework actionable by providing the challenge library, the analytics, and the export tools that professors need to run CTF-based assessments without building everything from scratch. The result is a grading process that is fairer to students, more informative for employers, and more defensible for accreditation, all while reducing administrative overhead for faculty.

The students who graduate from programs using frameworks like this will have something most cybersecurity graduates do not: a documented track record of hands-on performance that speaks louder than any transcript.

Ready to bring hands-on assessment to your cybersecurity course?

Simulations Labs offers free access for qualifying universities through the Spotlight Program. Visit simulationslabs.com to get started.