The Gap: Why Finding the Right Challenge Is Harder Than It Should Be

Article 2 of 3 — From Library to the Right Match

When 'Plenty of Options' Becomes Its Own Problem

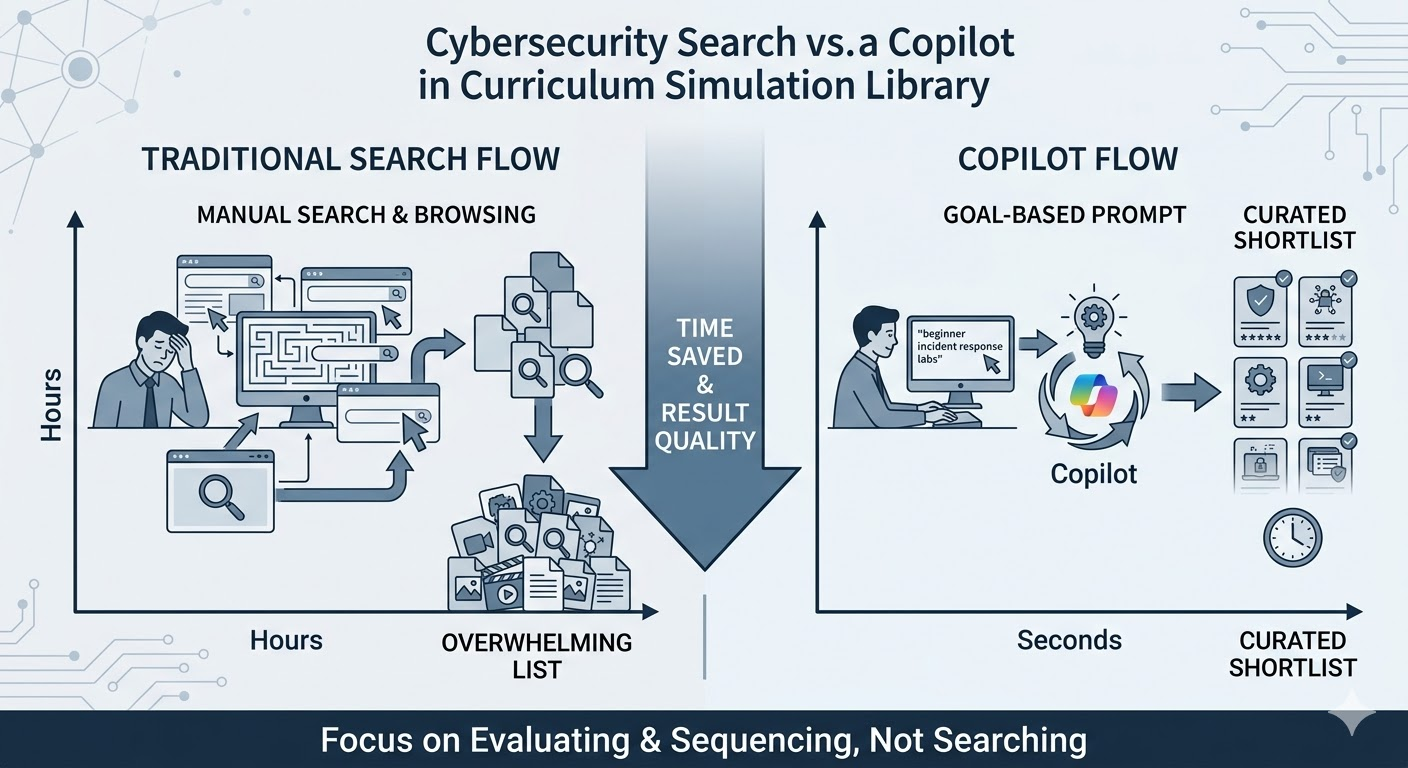

There's a particular kind of frustration that program leaders and cybersecurity instructors know well. You have access to resources. You have content. You know what you're trying to achieve. And you still spend a disproportionate amount of time just trying to figure out which thing to use.

It doesn't seem like a serious problem from the outside. But in practice, it compounds. Every hour spent browsing, evaluating, second-guessing, and settling for 'close enough' is an hour not spent on the work that actually matters — teaching, coaching, building programs, and developing people.

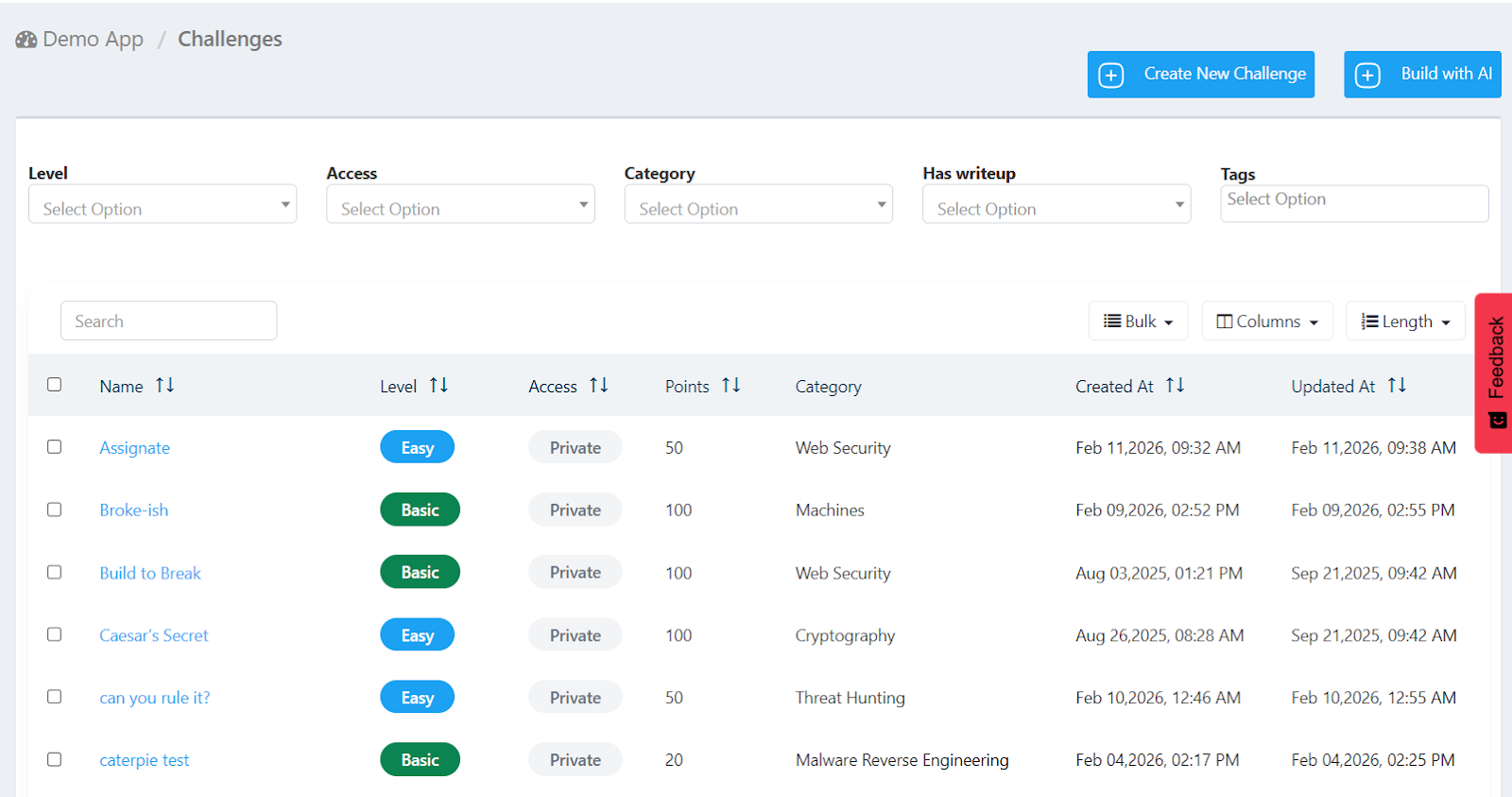

The Simulations Labs challenges library has more than 2,100 scenarios. That's a genuine asset. It's the product of ten years of professional development across every major cybersecurity domain. But it also means that the question of how to navigate it efficiently is real and worth addressing directly.

What the Search Problem Actually Looks Like

Here's a concrete example. A security team leader wants to build a short training track for junior SOC analysts. The focus is threat detection — specifically, recognizing the behavioral patterns that suggest credential abuse or lateral movement. The analysts are relatively new, so difficulty needs to be calibrated carefully. Too easy and there's no development. Too hard and the experience becomes discouraging.

In a well-organized library, this should be straightforward. But 'well-organized' doesn't automatically mean 'easy to search.' Tags help, but tags are broad. Category filters narrow the options, but not always along the dimensions that matter most to the person searching. Role relevance is implicit in challenge design but not always surfaced in metadata. The result is that finding the right set of three or four challenges — out of 2,100 options — can take longer than it should.

Multiply this across an organization with multiple instructors, multiple programs, and multiple learner cohorts, and the cumulative cost becomes significant. Not in a dramatic way, but in the slow, steady drain that makes training programs harder to sustain than they need to be.

Why Traditional Search Falls Short

The fundamental issue with standard search — keyword matching, filter combinations, category browsing — is that it's input-driven. You have to know the right words to get the right results. And in cybersecurity training, the vocabulary is both technical and highly contextual.

Someone searching for 'broken access control' might get results that are technically accurate but pitched at the wrong level, or designed for a different professional role, or part of a category that doesn't match the learning goal. Someone searching for 'challenges for a red team assessment' is expressing something that a keyword filter simply can't parse — because what they mean is nuanced, and the nuance matters.

There's also the problem of unknown unknowns. Instructors don't always know what's in the library. If you don't know that a specific type of scenario exists, you can't search for it. You need something that can meet you where you are — with a rough description of what you need — and surface what fits.

Enter the Simulations AI Copilot

This is the problem the Simulations AI Copilot was built to solve. Not AI as a buzzword. AI as a practical answer to a real operational friction.

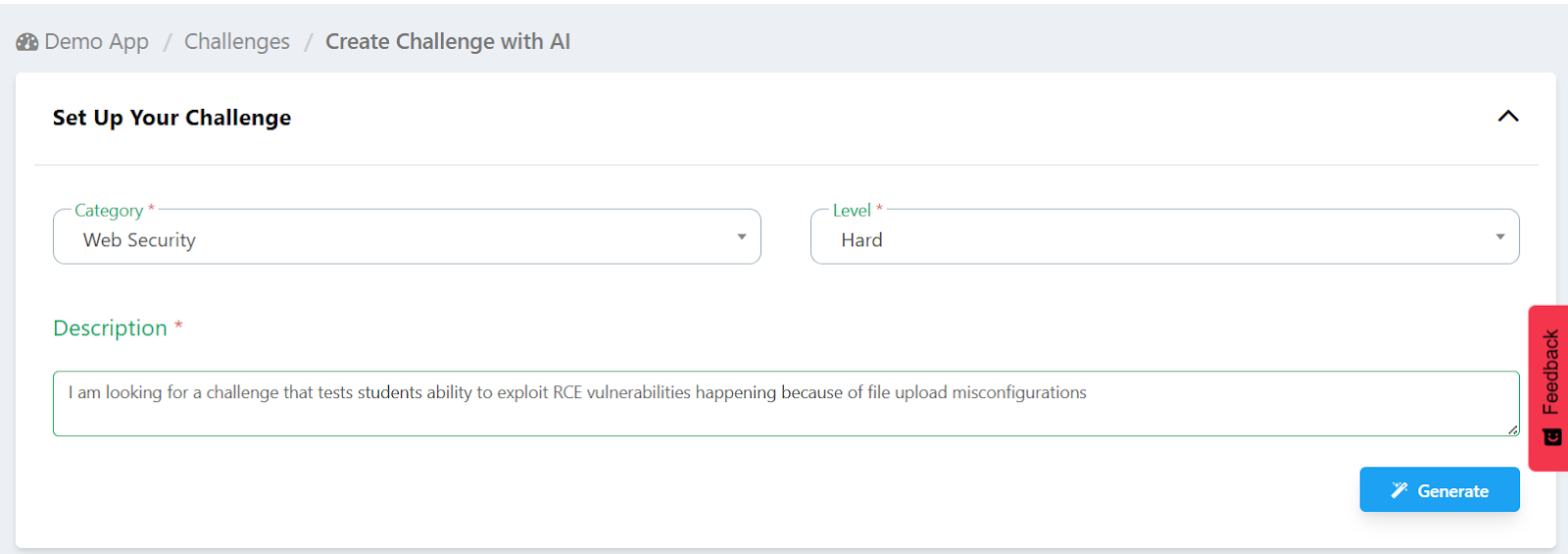

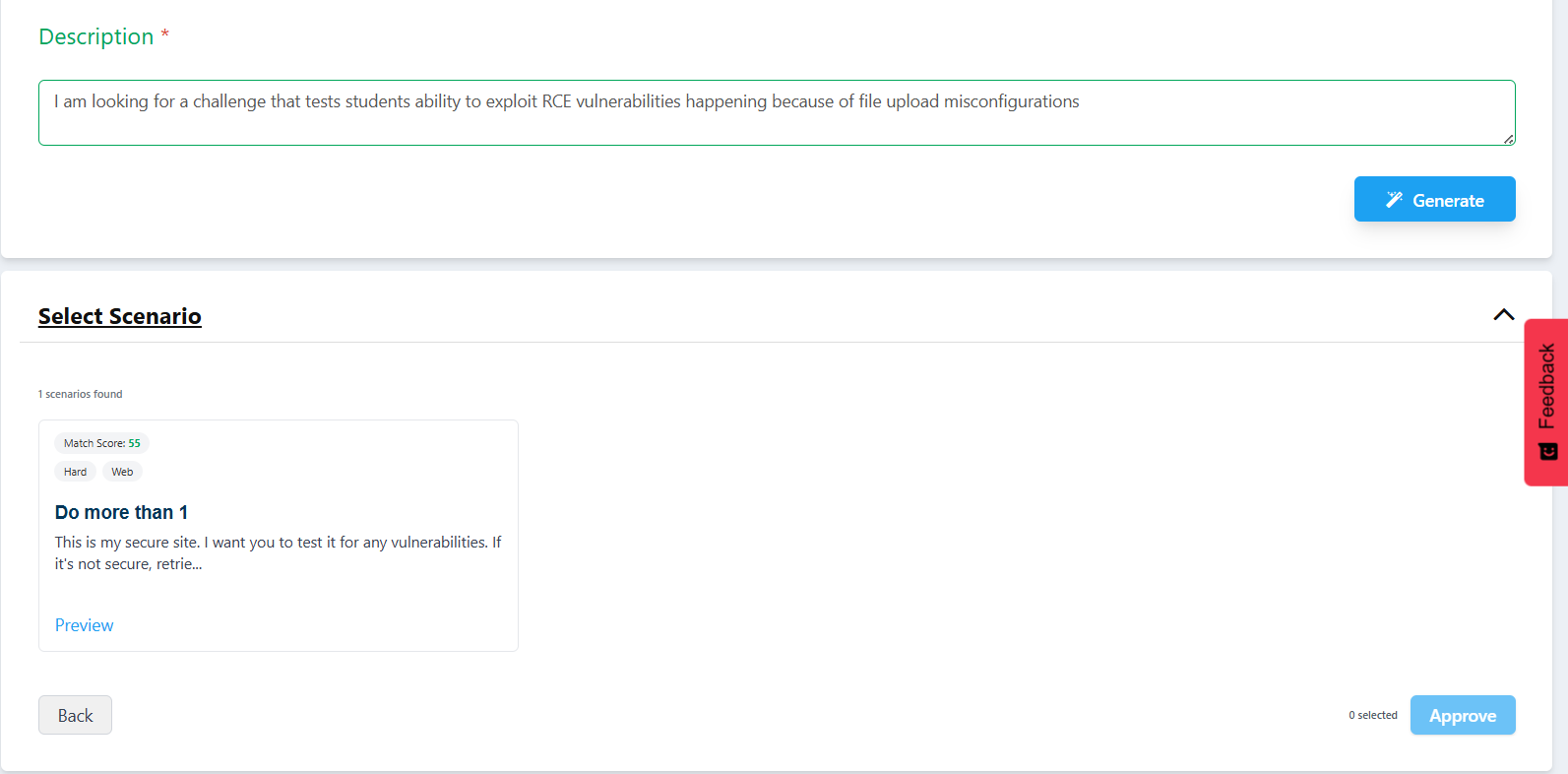

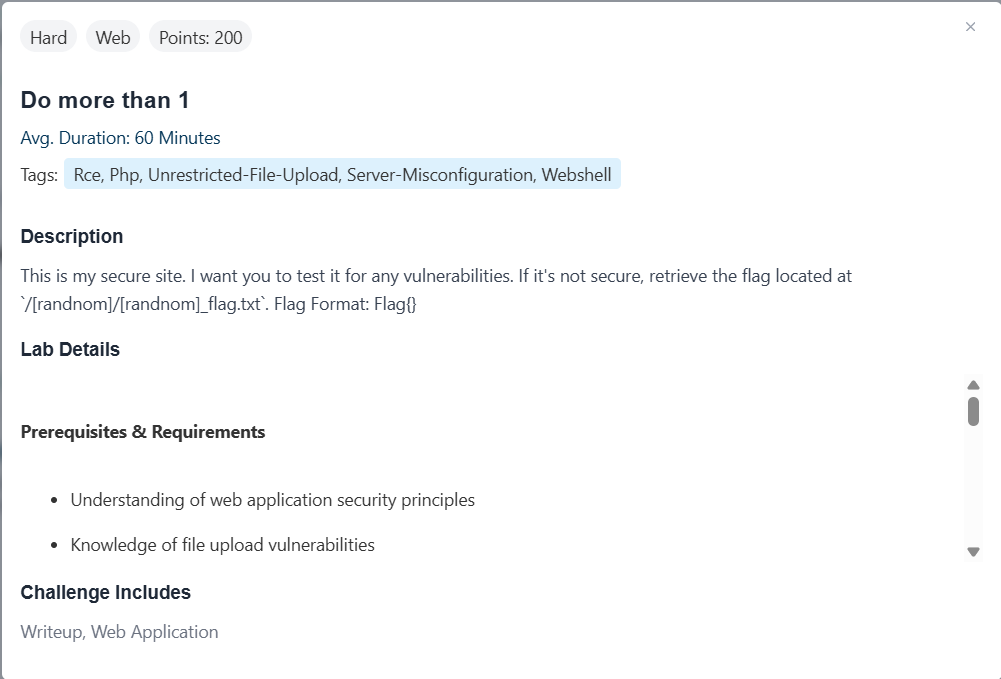

The Copilot is Simulations Labs' AI-powered feature that sits between you and the library. You describe what you're looking for in plain language, in your own terms, with as much or as little detail as you have, and it returns the challenges most likely to match what you actually need.

This sounds simple, and in practice it is. But the simplicity is the point. The underlying intelligence is doing the work of translation — taking a human description of a training goal and matching it against the structured attributes of thousands of challenges: their categories, difficulty levels, technical tags, and professional role relevance.

The result is a ranked shortlist, not a long list. The Copilot doesn't return everything that partially matches — it surfaces the best matches for what was described, ordered by relevance. From there, instructors can preview each challenge before selecting it, so the final decision always involves human judgment. The Copilot removes the friction; the person makes the call.

What Changes in Practice

For instructors building curricula

Instead of browsing for hours, you describe the learning goal and get a working shortlist in seconds. You spend your time evaluating and sequencing — the genuinely skilled parts of curriculum design — rather than searching.

For security team leaders

You can scope a training track around a specific skill gap, a job role, or a team profile without needing to understand the full structure of the library first. The Copilot handles the mapping between your goal and the available options.

For community organizers and competition hosts

Building a balanced challenge set for participants at different levels becomes a much faster process. Describe the mix you want — beginner-friendly through advanced, across multiple domains — and the Copilot builds the shortlist for you to confirm.

The Human Element Doesn't Disappear

It's worth being direct about what the Simulations AI Copilot is and isn't. It's a tool for removing friction, not replacing judgment. An experienced instructor still knows things that no algorithm does — how a particular cohort learns, what motivation looks like in a training room, which challenges tend to spark the best discussions, how to sequence difficulty to build confidence without complacency.

The Copilot doesn't replace that. It just means that the instructor isn't spending three hours on a task that should take ten minutes. Good judgment gets more room to operate when it doesn't have to fight through operational drag first.

In the next article, we'll go deeper into how the Simulations AI Copilot actually works — what the AI is doing under the hood, and why that matters for the quality of the matches it returns. For teams that want to understand the tool before trusting it, that piece is for you.

Read the next article: How the Simulations AI Copilot Actually Works